London, Ontario City Council Delays Discussion of Censoring Pro-Life Flyers

Last week, the London City Council met to discuss a proposed by-law that sought to censor the free expression of pro-life activists by banning pro-life flyers that show the truth of abortion through graphic imagery.

They decided not to go forward with this by-law, instead asking city staff to consider legislation forcing pro-life groups to stick their flyers in envelopes with content warnings.

Some councillors, such as Councillor Stephen Turner, correctly acknowledged that a city council cannot regulate the message of pro-life protesters, and voted against referring the proposed by-law back to staff. Most councillors, however, voted in favour of the referral.

In a few months, we’ll likely see these unconstitutional by-laws come back to London’s City Council as city staff prepare a by-law forcing pro-life groups to hide their flyers behind trigger warnings. Until then, we need to keep emailing the London City Council to let them know that censoring the pro-life movement is unacceptable.

Please sign our petition demanding that London’s City Council reject any by-law which seeks to censor pro-life protest. It is not the place for governments to step in and exert control over peaceful protest. Thank you for all your help in combatting these attacks on freedom of expression.

Best wishes,

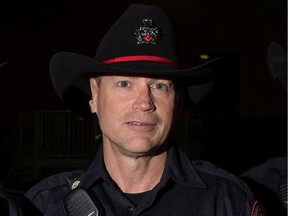

James Schadenberg and the entire CitizenGO Team

P.S. If you have already signed, please share the petition with your friends.

Here’s the email we sent you earlier on this:

City Councillors in London, Ontario are trying to attack the free speech of pro-lifers and censor the pro-life message. On Tuesday, March 22nd, they will be debating and possibly voting on two proposed by-laws that were explicitly designed to stop the distribution of pro-life flyers that show the reality of abortion through graphic imagery. Please sign this petition demanding that all London City Councillors reject the proposed by-laws which seek to censor the free expression of pro-life activists.

Dear Paul,

I have an urgent petition I would like you to consider signing.

City Councillors in London, Ontario are trying to censor pro-life activism and hide the truth about abortion in their city.

Two by-laws have been proposed to London’s Community and Protective Services Committee that were explicitly designed to censor pro-life activists by stopping the distribution of pro-life flyers that show the reality of abortion through graphic imagery.

One of these by-laws proposes fining people for delivering unaddressed flyers to properties that display “No Flyers”, “No Junk Mail”, or “No Unsolicited Mail” signs. The other by-law bans the distribution of any flyer that contains graphic imagery, citing as an example “dismembered human beings or aborted fetuses”, but it also bans anything that “may cause or trigger a negative reaction to the health and wellbeing of any person at any scale”. The maximum fine for violating either of these by-laws is $5,000.

These by-laws censor peaceful pro-life protests and deny pro-life activists their freedom of expression, which is guaranteed to them in the Canadian Charter of Rights and Freedoms. On Tuesday, March 22nd, councillors will be debating and possibly voting on these by-laws!

These by-laws were drafted largely in response to activism that pro-life groups such as the Canadian Centre for Bio-Ethical Reform (CCBR) carried out in London in 2020, though groups like London Against Abortion were conducting similar activism in London long before then.

Radical pro-abortion groups such as Pro-Choice London and the Viewer Discretion Legislation Coalition have been doing everything they can to censor London’s thriving pro-life movement. They’ve been crafting their own petitions and lobbying politicians, with the support of the mainstream media at every step.

So even by-laws that pretend to be neutral by censoring all flyers must be called out for what they are: an attempt by radical-leftist activists to use the government to censor their political opponents.

CCBR has stated that they believe they’re being denied their Charter right to freedom of expression, and other local pro-life groups such as the London Area Right to Life Association have stated the same.

Governments must never ban the distribution of “triggering” images when they depict the harsh reality of evils that are occurring in society.

Images that cause discomfort or even contain graphic material have been essential to many protest movements, whether those opposing unjust wars and tyrannical dictatorships or those opposing social evils such as slavery and child labour.

It’s always in the interests of those in power who support such evils to sanitize these evils and keep them invisible. It’s the only way their propaganda can be effective.

This is why there are bubble zones around abortion clinics throughout much of Canada, and why London is trying to punish anyone who dares to show an image of an aborted baby in public. These images decimate the narrative that abortion is merely “a woman’s choice about her body” and show it for what it really is: the brutal killing of an unborn human child.

We must stand up for our right to freedom of expression, and not let governments decide which peaceful protests are allowed, and which are not.

Thanks for all you do,

James Schadenberg and the entire CitizenGO Team